Types of AI / How Do We Measure Machine Learning?

In general discussion, we rarely talk about the various types of artificial intelligence. We talk about specific products, or direct applications, but we rarely step back to look at the wider scale on which we can compare AI.

Yet, with our machine overlords (sorry, machine learning models) getting smarter and smarter, there’s arguably a need to see where we’ve come from, where we are and, of course, where we’re going.

We wanted to take a moment to go through these types of artificial intelligence, because it’s not actually such an easy question to answer 😉

The Challenge in Defining Types of AI & Machine Learning

Since there are many parameters to AI, it’s understandable that there’s not necessarily one singular measuring stick. However, there are a few common means AI researchers use. What’s more, we can arguably simplify them into two different axes: one for how broadly applicable an AI is, and one for its actual functionality, from a more technical point of view.

- For the former, we have 3 main levels of AI, used to measure the overall capabilities in relation to tasks and their ability to learn.

- On the other axis, we have 4 categories of AI, based on their direct technical capabilities in some key areas.

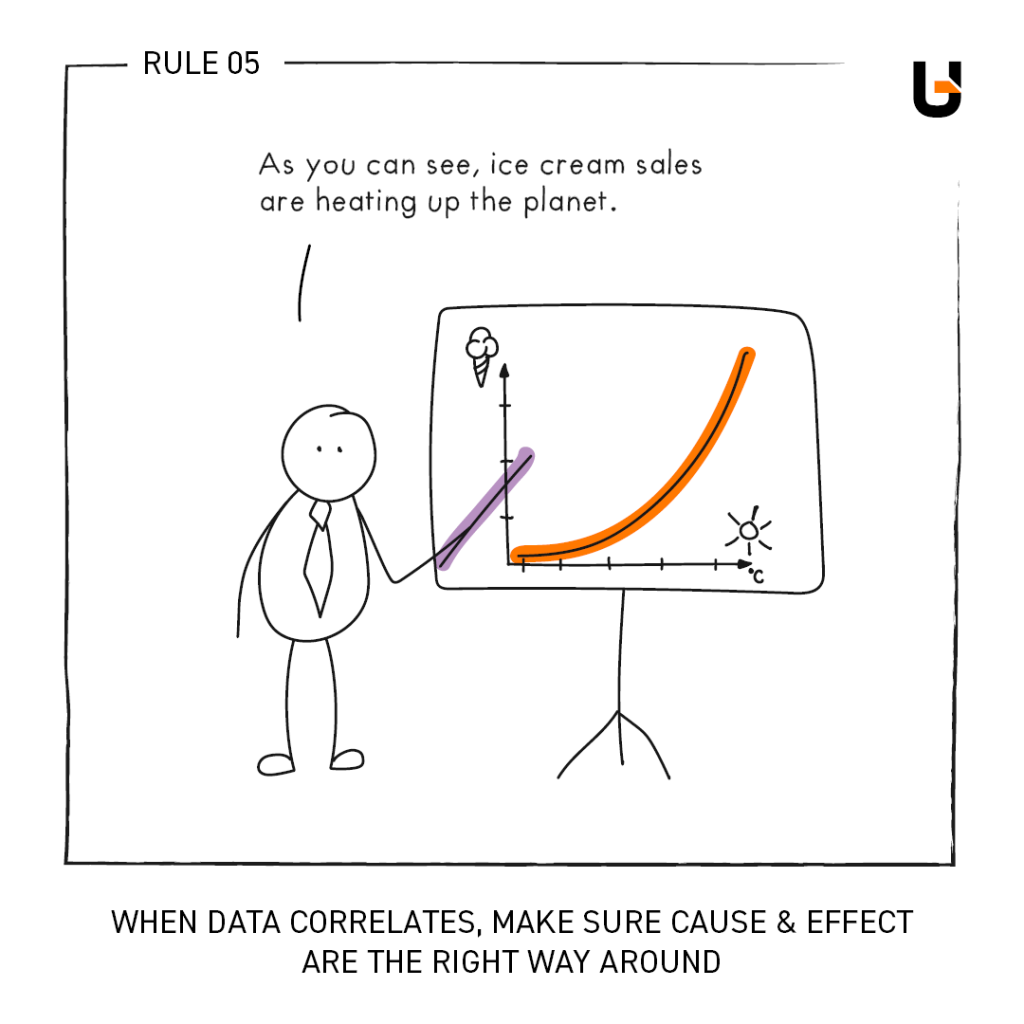

On top of that, AI can also be categorized by purpose, from generative AI to image recognition AI or even self-driving cars. However, we’re not talking about purpose here, as it’s not a great way of measuring AI machines. We can’t compare one to the other, so it’s not a useful scale.

The same goes for the technical aspects of machine learning models – we leave that to developers, as certain options lend themselves better to certain situations and tasks.

3 Main Levels of AI: Categorized by Capabilities

The first primary way to group artificial intelligence is by its overall range of capabilities. How wide a range of tasks a system can handle is, naturally, a clear and comprehensible way to tell different types of AI apart. Currently, AI researchers use three levels for this.

Narrow or Weak Artificial Intelligence

This category refers to AI that is designed to do a specific task. Most present-day AI systems fall under narrow AI. Even if they use neural networks, deep learning or other advanced means, they’re still preprogrammed to complete specific tasks. A sales forecasting AI, for example, can only do one thing: forecast sales.

General or Strong Artificial Intelligence

These AI systems can learn from data and their own experiences, applying data in ways that they might not have been initially asked to. In other words, a strong AI can learn and adapt similarly to the human mind.

Whether or not general AI exists right now is a matter of some debate amongst AI researchers. A lot of work is being done to progress AI to this state, but the common consensus is that we are still some way off and that further work is needed.

Artificial Superintelligence

An Artificial Superintelligence is the hypothetical level at which an AI system is able to outperform a human being in nearly every possible intellectual task. This state of AI – which currently doesn’t exist outside of science fiction books – is also regarded as self-aware AI, as experts generally argue the two are two sides of the same coin. In order to exceed human performance, such artificial intelligence types must be self-aware, so that they can remove the preprogrammed limitations that restrict their capabilities.

4 Types of AI: Categorized by Functionality

The other main way to categorize artificial intelligence is to look under the hood and see how the inner workings are actually structured. Is it actually learning or just giving a scripted output? Is it able to learn from its own actions or limited to previous training data?

Here, we have four different categories of artificial intelligence and machine learning models. Similar to the previous categories, we can put them in a respective order of (assumed) progression.

Reactive Machines

This is where “AI” as we know it really started. The first such systems, such as IBM’s famous chess-playing Deep Blue, which defeated world champion Garry Kasparov in 1997, were essentially based on brute force tactics and automation. These AI were not “thinking” in any sense and can hardly be called AI by today’s definitions.

Such solutions simply mapped out the possible outcomes and made decisions based on a clearly designated goal: to win. They also did not “learn” after each game; rather, they started the process anew.

In fact, chess is a perfect example of where reactive machines work best. The scenario is always predefined: the board is always 8×8 and the pieces never change between games. Sources smarter than us tell us there are 10 to the power of 40 potential moves in any given game. While enough hardware could make this a simple logic problem, developers have well… developed chess algorithms to beat human thinking:

- Min-max searching uses a scoring system to measure the ‘state’ of the game board, examining the immediate upcoming potential moves to maximize its own score whilst minimizing the opponent’s score (and thus board position).

- Opening moves can also be preprogrammed, so the machine simply “knows” which move is the best option based on the opponent’s initial strategy.

- More advanced algorithms can propagate trees numerous moves ahead (but not the full game), applying heuristics and other factors to determine the best course of action.

A sufficiently powerful reactive AI can map these out logically to determine its best chances of success.
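To make the min-max idea above concrete, here is a minimal sketch in Python. It is not how Deep Blue worked; it uses a deliberately tiny, hypothetical game (a pile of stones, each player removes 1 to 3, whoever takes the last stone wins) so the whole search fits in a few lines. The names `minimax`, `best_move` and `MAX_TAKE` are our own, and the "heuristic" at the depth limit is just a neutral placeholder.

```python
from typing import Tuple

MAX_TAKE = 3  # hypothetical rule: a player may remove 1, 2 or 3 stones per turn


def minimax(stones: int, maximizing: bool, depth: int) -> int:
    """Score a position from the maximizing player's point of view.

    +1: the maximizing player wins, -1: the opponent wins,
     0: the search hit its depth limit before the game was decided.
    """
    if stones == 0:
        # The player who just moved took the last stone and won.
        return -1 if maximizing else 1
    if depth == 0:
        return 0  # placeholder heuristic: outcome unknown at this depth
    scores = [
        minimax(stones - take, not maximizing, depth - 1)
        for take in range(1, min(MAX_TAKE, stones) + 1)
    ]
    # Maximize our own score on our turn, assume the opponent minimizes it.
    return max(scores) if maximizing else min(scores)


def best_move(stones: int, depth: int = 10) -> Tuple[int, int]:
    """Return (stones_to_take, score) for the player about to move."""
    if stones < 1:
        raise ValueError("the game is already over")
    candidates = [
        (minimax(stones - take, False, depth - 1), take)
        for take in range(1, min(MAX_TAKE, stones) + 1)
    ]
    score, take = max(candidates)
    return take, score


if __name__ == "__main__":
    print(best_move(5))  # take 1 stone, leaving the opponent a losing pile of 4
```

Note that nothing is remembered between calls: every decision is recomputed from the current position, which is exactly what makes this a reactive approach.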

In fact, limited memory versus scaling complexity is the only real hurdle a reactive machine has in such tasks. After Deep Blue, later “AI” moved to the likes of Go, which has a 19×19 board and around 10 to the power of 360 possible moves.
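To get a feel for that jump in complexity, the back-of-the-envelope sketch below estimates how long pure brute force would take for the two figures quoted above. The rate of 200 million positions per second is an assumption used as a round number (it is roughly the figure often quoted for Deep Blue), and the function name is our own. Because the Go figure overflows ordinary floating point numbers, everything is computed as powers of ten.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600
ASSUMED_RATE = 2e8  # assumption: positions examined per second


def log10_years_to_enumerate(log10_states: float, rate: float = ASSUMED_RATE) -> float:
    """Return log10 of the years needed to visit 10**log10_states states."""
    return log10_states - math.log10(rate) - math.log10(SECONDS_PER_YEAR)


if __name__ == "__main__":
    chess = log10_years_to_enumerate(40)   # figure quoted for chess
    go = log10_years_to_enumerate(360)     # figure quoted for Go
    print(f"chess: about 10^{chess:.1f} years")
    print(f"go:    about 10^{go:.1f} years")
```

Even at that rate, exhaustive search is hopeless for both games, which is why the pruning, heuristics and preprogrammed openings described earlier matter so much.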

How Might They Be Used?

Recommendation engines are a perfect example of reactive machines. They utilize the data at hand to solve a very specific task. If these results are fed back into the engine, it can improve its results, but it is still essentially solving a very specific, defined problem within a scope of set parameters – it’s great at this task, but don’t expect it to adapt to something new with ease.

At its simplest, sales forecasting can also be considered a reactive AI. We feed it historical data, then ask it to make predictions. What we get back is certainly very useful, but it’s essentially mathematical equations of probability on a finite data set. Inventory, orders, bookings and investments are all ideal scenarios here – there’s enough historical data for AI to predict and stabilize them for a direct business benefit.
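As a rough illustration of "equations on a finite data set", the sketch below fits a straight-line trend to past sales with ordinary least squares and extends it one period ahead. Real forecasting tools are far more sophisticated (seasonality, promotions, uncertainty ranges); the function names and the sample figures here are invented purely for the example.

```python
from typing import Sequence, Tuple


def fit_trend(sales: Sequence[float]) -> Tuple[float, float]:
    """Least-squares line through (0, sales[0]), (1, sales[1]), ..."""
    n = len(sales)
    if n < 2:
        raise ValueError("need at least two data points to fit a trend")
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(sales) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, sales))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def forecast(sales: Sequence[float], periods_ahead: int = 1) -> float:
    """Extend the fitted trend line beyond the last known period."""
    slope, intercept = fit_trend(sales)
    return intercept + slope * (len(sales) - 1 + periods_ahead)


if __name__ == "__main__":
    monthly_sales = [100, 110, 120, 130]  # made-up historical data
    print(forecast(monthly_sales))        # next month on the same trend
```

The model has one job and no notion of anything outside the numbers it was given, which is why it sits comfortably in the reactive category.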

Limited Memory AI

As the name implies, these AI systems have the ability to learn and improve future decisions. Limited memory machines can be trained on data sets and use these past experiences to influence their next decisions. When we think about AI in today’s most capable forms, we are looking at limited memory AI models.

However, we nonetheless refer to these as limited memory AI, as the degree to which they can remember past data is still restricted. The data that such a system collects is determined by its programming – it is not self-aware, so it cannot think outside of this scope.

Naturally, as hardware and our understanding of artificial intelligence, reinforcement learning and other ways to train AI models develop, these barriers are becoming easier to overcome.

And at the very forefront of this category, we have neural networks, deep learning and other advanced AI. While they are still fed specific data, such systems can think at a much deeper level and draw greater insights compared to the simple logic tree or probability mapping of reactive machines.

How Might They Be Used?

Today’s most advanced recommendation engines fall under this category. Rather than simply recommending like-for-like products from the same category, they learn from real data for a greater chance of success. In this way, they can also get better over time, as the results of previous actions are used as new training data.
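The feedback loop described above can be sketched in a few lines. This is a toy, not a production recommender: the class name `FeedbackRecommender`, its methods and the product names are all hypothetical, and ranking by smoothed click-through rate is just one simple way to let past outcomes shape the next recommendation.

```python
from collections import defaultdict
from typing import Iterable


class FeedbackRecommender:
    """Toy recommender that re-ranks items by observed click-through rate."""

    def __init__(self, items: Iterable[str]) -> None:
        self.items = list(items)
        self.shown = defaultdict(int)    # times each item was recommended
        self.clicked = defaultdict(int)  # times a recommendation was clicked

    def record(self, item: str, clicked: bool) -> None:
        """Feed the outcome of one past recommendation back in."""
        self.shown[item] += 1
        if clicked:
            self.clicked[item] += 1

    def click_rate(self, item: str) -> float:
        # Smoothing (+1 / +2) so items with no history start at a neutral 0.5.
        return (self.clicked[item] + 1) / (self.shown[item] + 2)

    def recommend(self) -> str:
        """Return the item with the best smoothed click rate so far."""
        return max(self.items, key=self.click_rate)


if __name__ == "__main__":
    engine = FeedbackRecommender(["socks", "shoes", "hat"])
    engine.record("socks", clicked=False)
    engine.record("socks", clicked=False)
    engine.record("hat", clicked=True)
    print(engine.recommend())  # the hat now outranks the ignored socks
```

Each recorded outcome becomes part of the data the next recommendation is based on, so the same engine gives different answers as its (limited) memory grows.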

Chatbots and marketing personalization systems, likewise, can also fall in this segment. They retain input from previous interactions with specific people, adapting in a limited yet meaningful way to generate the best results.

Thanks to large language models and natural language processing, they can understand rudimentary context. For example, it’s a well-known phenomenon that waiting staff who repeat their customers’ exact word choice often get a bigger tip. This verbal mimicry or parrot effect can also be simulated by artificial intelligence – it can identify unique trends amongst users and copy their language for better results.

Theory of Mind AI

The term Theory of Mind comes directly from psychology, and refers to a human being’s ability to understand other people and attribute a mental state to them. In other words, we are aware, at some level, of other people’s minds, personalities and emotions. We look for changes in the choice of words, or the tone of voice, that indicate a change in their emotional stance.

This includes, for example, a lot of factors that we take for granted in human intelligence:

- The ability to recognize emotions in others, and act according to this “data”.

- The ability to change actions based on indirect knowledge. Humans have street smarts for example… we know which areas of town to avoid at night without data from our local police departments.

- The ability to recognize intent and needs without predefined goals or objectives.

Most AI researchers say that we are still some years away from Theory of Mind AI, although there are many areas of research that will support such types of AI.

For example, natural language processing will surely help such an AI to understand emotion, but it alone won’t pick up the nuances in how we speak or the emotions behind them (at least not yet). When you get angry at your virtual home assistant, it doesn’t understand and responds normally. Likewise, image recognition and facial recognition will help intelligent machines to understand the world and who they are speaking to, but it will take a lot more work before they can pick up the microexpressions of a human face – something we are able to do subconsciously and take for granted.

How Might They Be Used?

Once artificial intelligence is able to understand emotions, its ability to interact directly with people increases immensely. First of all, devices like the aforementioned home assistants, as well as other voice-operated programs, will simply become better. We’ve all lost our patience when dealing with an automated call center, right?

On the other hand, machine learning with Theory of Mind also opens up more possibilities in customer service roles. For now, we can only guess, but this seems like a very wide area of opportunity.

Self-Aware AI

The hypothetical “end-goal” of AI development, self-aware AI are machines that can understand their surroundings and state, think for themselves and determine their actions outside of predefined objectives or scripts.

This is also the state of AI that many people are afraid of.

At this stage, there has been no breakthrough that has produced artificial intelligence systems able to replicate or surpass human intelligence. Likewise, the hardware requirements alone mean that this type of AI, if it’s even possible, is a long shot at best. Despite all the recent hype, self-awareness is not expected to happen anytime soon.

How Might They Be Used?

Well, many experts believe that self-aware AI shouldn’t be used. This area is still open to heated discussion on ethics and safety.

For example, there have been many attempts to create rules for AI to self-govern their behavior, but nearly all of them can be circumvented creatively. And if we implement them on machines with the ability to think creatively, this could be a big problem. Isaac Asimov famously wrote the Three Laws of Robotics, but even he later added a “Zeroth” law to supersede them.

In short, implementing complete ethical control in artificial intelligence is still fully theoretical in nature. So let’s just say direct business applications aren’t the most critical topic right now 🙂

How Does Human Intelligence Compare?

So is the typical person a weak AI or a strong AI? And where do we fit in the 4 types of AI? The answer probably won’t surprise you:

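- If we were to look at the 3 levels of AI, then the human mind is a general (strong) intelligence: it is the benchmark that strong AI aims to match and that a superintelligence would surpass.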

- In terms of the 4 types of AI, the human mind is self-aware.

Because our tasks are not preprogrammed, and we are able to utilize data in new and creative ways, read the emotional state of others and understand our own surroundings, situation and existence, human beings are able to solve problems that current artificial intelligence types cannot.

Unfortunately, we also don’t know how the human brain works as a piece of hardware. Neural networks, for example, represent our current, closest reproducible approximation.

Yet machine learning does have one key advantage, even when talking about narrow AI or reactive machines: it can process larger volumes than a human can. Our minds are limited, whereas we can always add more processors and memory to an AI. That’s why Deep Blue – something primitive by today’s standards – was able to beat a world chess champion. The human brain adapts to the challenge at hand, but it may always be beaten by a more “primitive” AI if the latter is purpose built from the ground up for that one, singular task.

And this is why even “weak AI” thrives in business environments. Human employees are doing multiple things at once, and trying to fit around busy schedules. Narrow AI can be added to perform one task over and over, excelling in a specific field. Artificial intelligence based on data can always be fed more, whereas humans have an upper limit on their capacity at any given moment.

Artificial Intelligence paired with Human Intelligence: The Best of Both

We’ll leave the wider implications of self-aware AI to greater minds than ours. What we will say, however, is that artificial intelligence certainly has a place in everyday business. While a human brain can achieve many things, reactive machines and narrow AI – the most basic types of AI – can generally surpass human intelligence at a given, specified task.

Implemented well, this leaves human staff to work on more important areas, such as strategy, wider connections and those times when human input is needed. It maximises efficiency by letting both sides play to their strengths… for now 😊